Unity: Making a simple audio visualization

Posted by Dimitri | May 18th, 2012 | Filed under Programming

As stated in the title, this Unity programming tutorial shows how to create a simple audio visualizer. This post focuses on explaining how to obtain the audio data from the music currently being played and how to process that data to create an audio visualization. It won’t explain in detail how to create a specific visual effect. The code featured below and the example project were created and tested in Unity 3.5.2.

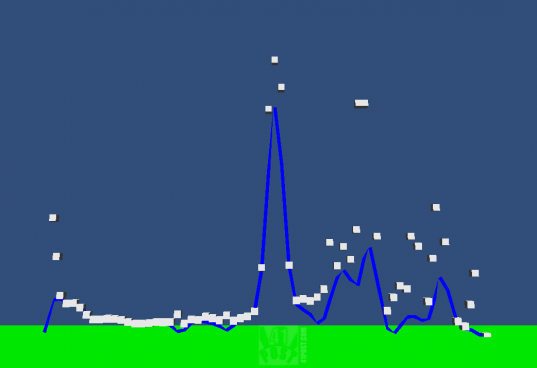

For this post, the audio spectrum data will be displayed as a line using a LineRenderer component, along with some cubes that fall from the top of the line, much like the little white bars above the spectrum in the Windows Media Player “Bars” visualization. However, this visualization will be 3D rather than 2D and will render a continuous line rather than bars.

To achieve that, a script and the following elements will be required:

- An audio file supported by Unity.

- A game object that holds the audio visualization script and a LineRenderer.

- A LineRenderer component attached to the same game object as the audio visualization script.

- A cube prefab.

- A Material for the LineRenderer.

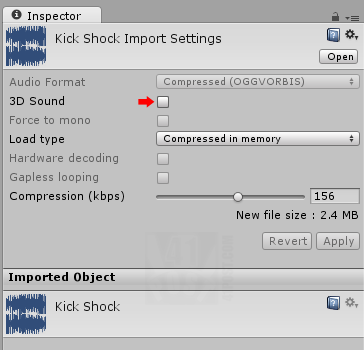

For a list of supported audio files, please refer to this link. After importing the audio file into Unity, select it in the Project tab and uncheck the 3D Sound option. For the game object, create an empty one by selecting GameObject->Create Empty. The position of this game object marks the center of the LineRenderer, so position it accordingly. Next, attach a LineRenderer component to the newly created game object by selecting it in the Hierarchy tab and then clicking on Component->Effects->Line Renderer.

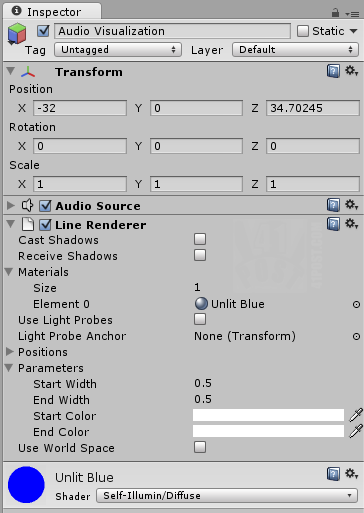

Next, create a Material for the line. For best results, create a self-illuminated diffuse material and give it a color. Assign this material to the LineRenderer component of the Audio Visualization game object. At this point, the audio file import settings and the Audio Visualization game object should look like this:

Don’t forget to leave the ‘3D Sound’ option unchecked.

The name of the game object doesn’t matter. This tutorial will refer to it as the ‘Audio Visualization game object’ from this point on. After adding the LineRenderer and the material, it should look like the screenshot above.

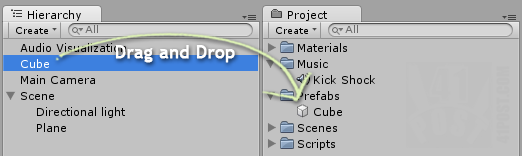

To create a cube prefab, select GameObject->Create Other->Cube. This adds a cube to your scene. Create a new prefab by right-clicking anywhere in the Project tab and selecting Create->Prefab. Name it Cube. Now, drag and drop the Cube from the Hierarchy tab onto the Cube prefab in the Project tab, like this:

Drag and drop the Cube game object into the prefab.

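The only thing missing is the script that manipulates all these elements to create an audio visualization. Just attach it to the Audio Visualization game object. Note that the script reads its audio from an AudioSource component on the same game object, so if there isn’t one yet, add it (Component->Audio->Audio Source) and assign the imported audio file to its Audio Clip slot. Here’s the code: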

    using UnityEngine;
    using System.Collections;

    public class AudioVisualizer : MonoBehaviour
    {
        //An AudioSource object so the music can be played
        private AudioSource aSource;
        //A float array that stores the audio samples
        public float[] samples = new float[64];
        //A renderer that will draw a line at the screen
        private LineRenderer lRenderer;
        //A reference to the cube prefab
        public GameObject cube;
        //The transform attached to this game object
        private Transform goTransform;
        //The position of the current cube. Will also be the position of each point of the line.
        private Vector3 cubePos;
        //An array that stores the Transforms of all instantiated cubes
        private Transform[] cubesTransform;
        //The velocity that the cubes will drop
        private Vector3 gravity = new Vector3(0.0f,0.25f,0.0f);

        void Awake ()
        {
            //Get and store a reference to the following attached components:
            //AudioSource
            this.aSource = GetComponent<AudioSource>();
            //LineRenderer
            this.lRenderer = GetComponent<LineRenderer>();
            //Transform
            this.goTransform = GetComponent<Transform>();
        }

        void Start()
        {
            //The line should have the same number of points as the number of samples
            lRenderer.SetVertexCount(samples.Length);
            //The cubesTransform array should be initialized with the same length as the samples array
            cubesTransform = new Transform[samples.Length];
            //Center the audio visualization line at the X axis, according to the samples array length
            goTransform.position = new Vector3(-samples.Length/2,goTransform.position.y,goTransform.position.z);

            //Create a temporary GameObject, that will serve as a reference to the most recent cloned cube
            GameObject tempCube;

            //For each sample
            for(int i=0; i<samples.Length;i++)
            {
                //Instantiate a cube placing it at the right side of the previous one
                tempCube = (GameObject) Instantiate(cube, new Vector3(goTransform.position.x + i, goTransform.position.y, goTransform.position.z),Quaternion.identity);
                //Get the recently instantiated cube Transform component
                cubesTransform[i] = tempCube.GetComponent<Transform>();
                //Make the cube a child of this game object
                cubesTransform[i].parent = goTransform;
            }
        }

        void Update ()
        {
            //Obtain the samples from the frequency bands of the attached AudioSource
            aSource.GetSpectrumData(this.samples,0,FFTWindow.BlackmanHarris);

            //For each sample
            for(int i=0; i<samples.Length;i++)
            {
                /*Set the cubePos Vector3 to the same value as the position of the corresponding
                 * cube. However, set its Y element according to the current sample.*/
                cubePos.Set(cubesTransform[i].position.x, Mathf.Clamp(samples[i]*(50+i*i),0,50), cubesTransform[i].position.z);

                //If the new cubePos.y is greater than the current cube position
                if(cubePos.y >= cubesTransform[i].position.y)
                {
                    //Set the cube to the new Y position
                    cubesTransform[i].position = cubePos;
                }
                else
                {
                    //The spectrum line is below the cube, make it fall
                    cubesTransform[i].position -= gravity;
                }

                /*Set the position of each vertex of the line based on the cube position.
                 * Since this method only takes absolute World space positions, it has
                 * been subtracted by the current game object position.*/
                lRenderer.SetPosition(i, cubePos - goTransform.position);
            }
        }
    }

At the beginning of the script, eight member variables are declared (lines 7 through 21). The first one is an AudioSource component, which plays back the audio file and acts as a handle for obtaining the audio spectrum data. The second one is an array of floats that stores that spectrum data. According to the documentation, the array length must be a power of 2 between 64 and 4096. Since the array is public, its size can be set through the Inspector. The next member variable is a LineRenderer. As the name suggests, it acts as a handle to the attached LineRenderer component, through which the number and position of the points that make up the line can be manipulated.
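
Because the array size can be changed in the Inspector, it may be worth checking the length before it ever reaches GetSpectrumData(). The following is a minimal sketch in plain C#, independent of Unity. The SpectrumBuffer class and its members are hypothetical names introduced here for illustration, and the 64 to 4096 limits are simply the ones quoted in this post, so check them against the documentation for your Unity version.

```csharp
using System;

// Hypothetical helper, not part of Unity or the example project.
public static class SpectrumBuffer
{
    // Limits as quoted in this post (assumption: verify for your Unity version).
    public const int MinLength = 64;
    public const int MaxLength = 4096;

    // True when length is a power of two within [MinLength, MaxLength].
    public static bool IsValidLength(int length)
    {
        // A positive power of two has exactly one bit set.
        bool isPowerOfTwo = length > 0 && (length & (length - 1)) == 0;
        return isPowerOfTwo && length >= MinLength && length <= MaxLength;
    }

    // Allocates the sample array, or throws if the length is not acceptable.
    public static float[] Create(int length)
    {
        if (!IsValidLength(length))
        {
            throw new ArgumentException(
                "Length must be a power of two between " + MinLength + " and " + MaxLength + ".",
                "length");
        }
        return new float[length];
    }
}
```

In the script above, a check like SpectrumBuffer.IsValidLength(samples.Length) could run in Start() before any cubes are instantiated.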

Next, there is a public GameObject named cube. It must be set in the Inspector after attaching the script: drag and drop the previously created prefab onto the cube slot. Next, there’s a Transform member variable, which holds a reference to the attached game object’s Transform. The final member variables are a Vector3 that stores the cube and line point position calculated from the audio data, an array of Transform components that is later used to manipulate the cubes’ positions, and a Vector3 that defines the speed at which the cubes fall.

After all the declarations, the Awake() method initializes the AudioSource, LineRenderer and Transform variables with handles to the components attached to the containing game object (lines 23 through 32).

The Start() method initializes the remaining member variables. It sets the number of points on the line to the number of samples from the spectrum data (line 37) and uses the same length to initialize the cubesTransform array (line 39). It then centers the containing game object on the X axis (line 41). E.g.: if samples.Length is 64, the game object will be placed at X: -32.

Finally, it’s time to populate the cubesTransform array. To do that, a local GameObject variable tempCube is created. It holds a temporary reference to the most recently instantiated cube inside the following for loop (line 44).

This loop instantiates as many cubes as there are audio spectrum samples by calling the Instantiate() method (line 50). This method creates a copy of the cube prefab passed as the first parameter, at the specified position and rotation. In this case, all cubes are aligned side by side along the X axis, with no rotation. A reference to the most recently instantiated cube is held by the tempCube variable.

Still inside this for loop, the Transform component of each cube is stored in the cubesTransform array (line 52) and the parent of each cube is set to the game object the script is attached to (line 54). This way, the Hierarchy tab doesn’t get flooded with cube clones, since they are all placed as children of the containing game object.

With the variables set up, the Update() method obtains the audio spectrum data by calling the GetSpectrumData() method of the AudioSource object (line 61). It takes three parameters: an array of floats that receives the spectrum data, an integer that defines which channel the audio data is taken from, and the window function applied to the spectrum data. The script above uses FFTWindow.BlackmanHarris, which is the most precise but also the slowest. For more information on this and the other window functions, please visit this link.
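
It can help to know roughly which frequencies each element of the samples array covers. A common assumption is that the returned values span 0 Hz up to half the output sample rate, split evenly across the array. Treat that as an assumption to verify against the documentation, not as something this post's script relies on. The SpectrumBins class below is a hypothetical helper written in plain C#; it is not part of Unity or the example project.

```csharp
// Hypothetical helper, not part of Unity or the example project.
// Assumes the spectrum array evenly covers 0 Hz up to sampleRate / 2.
public static class SpectrumBins
{
    // Width in Hz of the band represented by one array element.
    public static float BinWidth(int sampleRate, int binCount)
    {
        return (sampleRate / 2f) / binCount;
    }

    // Lowest frequency in Hz covered by the element at the given index.
    public static float BinStartFrequency(int index, int sampleRate, int binCount)
    {
        return index * BinWidth(sampleRate, binCount);
    }
}
```

Under that assumption, a 64-element array at a 48000 Hz sample rate would give bands 375 Hz wide, so samples[2] would start at 750 Hz. In Unity, the sample rate would typically be read from AudioSettings.outputSampleRate.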

The for loop inside Update() reads each spectrum value stored in the samples array and uses it to set the Y component of the cubePos Vector3 (line 68). Note that the X and Z components are set to the corresponding X and Z positions of the cube. Naturally, the Y position would be samples[i], but instead it’s Mathf.Clamp(samples[i]*(50+i*i), 0, 50).

The Mathf.Clamp() method limits the value to the range 0 to 50 (its second and third parameters). This matters for alignment purposes, because it defines the maximum height of the audio visualization. The first parameter multiplies the spectrum value by 50 (the maximum height) plus the square of i, because samples[i] always lies between zero and one. If the Y coordinates of the cubes were set to samples[i] directly, the movement range would be very small.
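
The height calculation can be isolated into a small function to see how the index affects the result. This is a sketch in plain C# that mirrors the expression used in the script, with Math.Min and Math.Max standing in for Mathf.Clamp so that it does not depend on Unity. The SpectrumScaling class and its member names are hypothetical.

```csharp
using System;

// Hypothetical helper mirroring Mathf.Clamp(samples[i]*(50+i*i), 0, 50).
public static class SpectrumScaling
{
    // Maximum height of the visualization, as used in the script.
    public const float MaxHeight = 50f;

    // Scales a spectrum value by (MaxHeight + index^2) and limits it to [0, MaxHeight].
    public static float ScaledHeight(float sample, int index)
    {
        float boosted = sample * (MaxHeight + index * index);
        return Math.Max(0f, Math.Min(MaxHeight, boosted));
    }
}
```

For instance, a value of 0.01 at index 0 gives a height of only about 0.5, while the same value at index 50 is multiplied by 2550 and comes out at about 25.5. A value of 1 is capped at 50 regardless of the index.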

Also, the spectrum values tend to get smaller as the sample index increases, which is why they are multiplied by a factor that includes the square of i: the higher the index, the stronger the boost.

Now that the position of each box and point is known, all that’s left is to assign it. For the boxes, a simple if statement checks whether the calculated Y position is greater than or equal to the box’s current Y position. If so, the box is placed at the calculated Y position; otherwise the line is below the box and the box should fall (lines 71 through 80). For the points of the line drawn by the LineRenderer, the containing game object’s position is subtracted from cubePos before calling SetPosition() (line 85). This converts the world-space cubePos into a position relative to the game object; for the line to sit on top of the cubes, the LineRenderer’s Use World Space option presumably needs to be unchecked.
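
The rule that decides whether a cube jumps up to the line or keeps falling can be written as a single function. The sketch below is plain C# and only handles the Y value; CubeFall and NextHeight are hypothetical names, and fallStep plays the role of the Y component of the gravity vector in the script.

```csharp
// Hypothetical helper, not part of Unity or the example project.
public static class CubeFall
{
    // Returns the cube's next height.
    // currentY: the cube's height in the previous frame.
    // lineY:    the height of the line at this sample (cubePos.y in the script).
    // fallStep: how far the cube drops in one update when the line is below it.
    public static float NextHeight(float currentY, float lineY, float fallStep)
    {
        if (lineY >= currentY)
        {
            // The line reached or passed the cube: carry the cube up with it.
            return lineY;
        }
        // The line is below the cube: let the cube fall by one step.
        return currentY - fallStep;
    }
}
```

The script passes a fixed 0.25 per frame, so the cubes fall faster at higher frame rates. Passing something like fallSpeed * Time.deltaTime as fallStep would be a variation on the script, not what it currently does, but it would make the fall speed independent of the frame rate.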

That’s it! Here’s a screenshot, followed by a video of the audio visualizer in action:

Screenshot of the audio visualizer featured in the example project.

Downloads

- unityaudiovisualizer.zip

- Song Credits: “Kick Shock” by Kevin MacLeod (incompetech.com). Licensed under Creative Commons “Attribution 3.0”.

you are like a god lol, just what i was looking for thanks for your help

OMG its amazin’.. u r so awesum.. please we need more tuts..like how to make this visualization more attractive like changing colors and size of cubes..

Is it possible to use this with a Microphone?

grreat!
